Numlock Awards Supplement: Why Count Critics?

The Numlock Awards Supplement is your one-stop awards season update. You’ll get two editions per week, one from Not Her Again’s Michael Domanico breaking down an individual Oscar contender or campaigner and taking you behind the storylines, and the other from Walt Hickey looking at the numerical analysis of the Oscars and the quest to predict them. Look for it in your inbox on Saturday and Sunday mornings. Today’s edition comes from Walter.

If there’s been a single takeaway of the analytics revolution in sports and politics and what have you, it’s that using numbers to draw conclusions is nice, but you have to make sure you’re counting the right numbers first. Turn to any coffee table book about numbers and you’ll find, like, a dozen anecdotes about this notion.

The gist is you want to make sure you’re drawing conclusions from indicators that are actually linked to the eventual outcome, not just indicators that have a reputation for doing so.

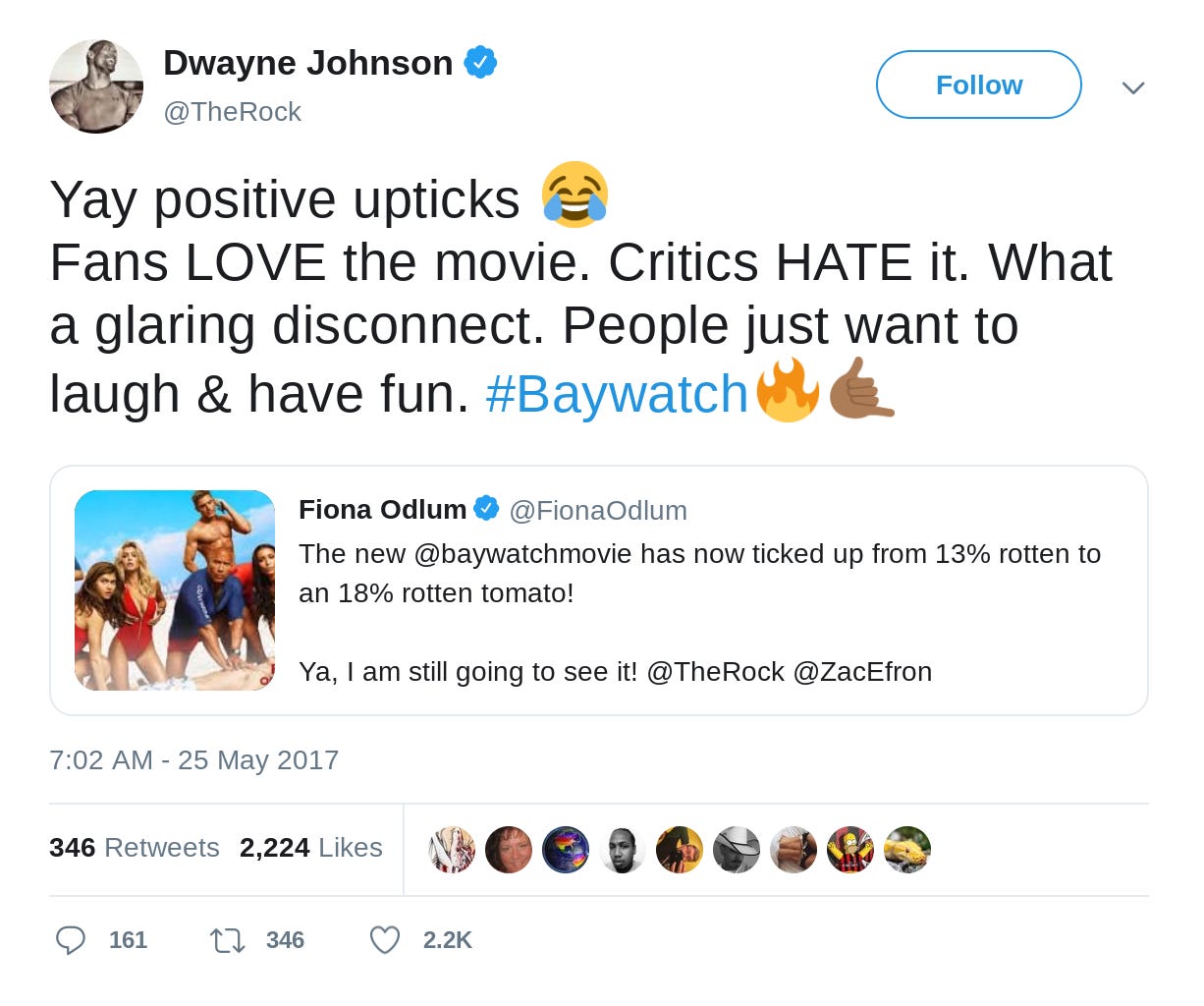

Which is why a key metric that I’m tweaking in an Oscar forecast model is the critical component. Critics I feel are in an unappealing position and deserve far more credit than they get. They get chewed out by fans who carry far too many chips on their shoulder, by creators who (understandably) take the consideration of their work personally, by the companies and marketers who resent expert product reviews, and by the aggregation websites that boil the flavor and nuance out of their work and grind an 800-word analysis into a binary vote. Then come December, when they get to weigh in on the finest their field has to offer, and humbly extend a modest prize to a creator who made an aspirational product, a bunch of degenerates like me get to try to figure out what that means for some real prize.

Therein lies a key tension at the core of the early part of the Oscar cycle. Namely, while critics on the whole care about the Oscars, it’s not their damned job to predict them. It’s not their job to appease a fanbase or flatter a creator or sell a ticket or move some Fandango-owned needle a half percentage point either. It’s their job to analyze a new piece of culture and talk about where it exists within the broader culture. They didn’t sign up for the rest of this.

The main point I’m driving at here is that critic groups are often read the wrong way. When I say that the New York Film Critics Circle hasn’t picked the Oscar winner for Best Picture since The Artist in 2011, it’s not a diss. It’s just an argument that perhaps the New York Film Critics Circle shouldn’t be in my model, because they’re not trying to predict the Oscars and thus are not doing a very good job of it.

I propose we leave the ones that actively don’t want to predict the Oscars out of it.

But the rest of them? Critics do form a supremely necessary part of the cycle, and I really want to capture that! So how are we going to do that?

The model I managed at FiveThirtyEight relied on only a few critics awards: the Critics Choice Award from the Broadcast Film Critics Association, the New York, Los Angeles, and Chicago film critic groups, the Golden Globes and the Satellites. The Globes are their own boozy maelstrom of retail campaigning, and the Satellites aren’t until like the week before the Oscars, so they’re barely a leading indicator to begin with.

There are lots and lots of film events and awards. Well into the hundreds. I pulled, from that list on IMDb, every award with ‘critic’ in the name. There are 88 of those. Lots aren’t even trying to play the Best Picture game, though, so I pulled the 35 of them that awarded their top prize to the film that also won Best Picture at the Oscars at least once in the past 10 years.

There are 13 awards that aligned with the Oscar winner five or more times in the past 10 years. Those awards are, conceivably, ones to really look to for insight on the state of the Oscar race. For one reason or another, their voters at least act like Academy voters.
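For anyone who wants to replicate that filtering step, here is a minimal sketch of how it could work. It is not the actual model code: the function names, the group names, and the film titles are all placeholders I made up for illustration, and the real input would be the top-prize history for each of the 88 awards.

```python
from typing import Dict, List

# Hypothetical data shapes: year -> winning film title.
Winners = Dict[int, str]


def count_matches(top_prizes: Winners, best_picture: Winners) -> int:
    """Count the years in which a group's top prize went to the Oscar winner."""
    return sum(
        1
        for year, film in top_prizes.items()
        if best_picture.get(year) == film
    )


def groups_with_at_least(
    history: Dict[str, Winners], best_picture: Winners, min_matches: int
) -> List[str]:
    """Return the groups that matched the Oscar winner at least min_matches times."""
    return sorted(
        name
        for name, prizes in history.items()
        if count_matches(prizes, best_picture) >= min_matches
    )


# Placeholder data only: three made-up seasons and three made-up groups.
best_picture = {1: "Film A", 2: "Film B", 3: "Film C"}
history = {
    "Group X": {1: "Film A", 2: "Film B", 3: "Film Z"},
    "Group Y": {1: "Film Q", 2: "Film B", 3: "Film Z"},
    "Group W": {1: "Film Q", 2: "Film R", 3: "Film Z"},
}

ever_matched = groups_with_at_least(history, best_picture, 1)  # the "at least once" cut
frequent = groups_with_at_least(history, best_picture, 2)      # the stricter cut
```

With real data the two thresholds would be 1 and 5 over a 10-year window, which is how the counts of 35 and 13 described above would fall out.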

The Los Angeles and New York film critics groups, both in the FiveThirtyEight model, are not among those 13. The Critics Choice is, and they’re really good at it. So is Chicago, but they’re so-so. Perhaps giving each of those awards an automatic inclusion in the roster isn’t actually helping us get better at predicting the Oscar winner.

Some years, the critics totally miss the Oscar winner. The Shape of Water, Birdman, and The King’s Speech were all awarded by fewer than a third of critics groups. Other years they basically nail it: Spotlight and 12 Years a Slave won 92 percent and 80 percent of those critics groups, respectively.

The rest of the past decade, about half of critics groups gave it to the eventual Best Picture winner. If you think about it, that is really quite good: There are 347 feature films that are eligible to win Best Picture this year, and in a decent year half of the critics groups will find a way to route their top prize to the eventual winner.
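To put a rough number on "really quite good," here is a back-of-the-envelope comparison. The baseline is an assumption on my part: it pretends a group picks uniformly at random from all 347 eligible films, which no real group does, so treat it as a floor rather than a fair benchmark.

```python
# Figures taken from the text above.
eligible_films = 347   # feature films eligible for Best Picture this year
typical_share = 0.5    # "about half" of critics groups pick the eventual winner

# Assumption: a group choosing uniformly at random among all eligible films.
chance_rate = 1 / eligible_films

# How many times better a typical year is than that naive baseline.
lift = typical_share / chance_rate

print(f"Random-pick baseline: {chance_rate:.2%}")
print(f"Typical year vs. baseline: {lift:.1f}x")
```

A more realistic baseline would restrict the pool to the handful of films actually in contention, which would shrink the gap considerably.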

And that is how we need to think about critic groups. Their voters are on the receiving end of the same campaigning that Oscar voters are. They get the same screeners and read the same stories and so on. Sometimes local critic groups have an independent streak that typically means they highlight films the Academy won’t, like New York’s critics or the Dorian Awards, which I vote on and which are given by GALECA: The Society of LGBTQ Entertainment Critics. But overall, they’re worth looking at in aggregate, and with the recognition that nobody is trying to be “right,” they’re trying to honor good work.

But some critics circles do end up aligning with the Academy, and rather than arbitrarily choose from a few major metropolitan areas to keep as permanent members in the new model, I’m designing relegation. Critics that have been really good in the past five years — the Dallas-Ft. Worth Film Critics Association is 5-for-5, and the Phoenix Critics Circle (8-for-10), Phoenix Film Critics Society Awards (4-for-5), and Critics Choice Awards (7-for-10) aren’t too shabby either — should be in the model in some capacity.
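Here is one way a relegation rule like that could be sketched. The records are the ones quoted above; everything else is an assumption for illustration, including the 60 percent cutoff, the five-season minimum, the function name, and the made-up group added to show what getting relegated looks like. The actual rule in the new model may well differ.

```python
from typing import Dict, List, Tuple

# (seasons matching the Oscar winner, seasons counted), as quoted in the text.
records: Dict[str, Tuple[int, int]] = {
    "Dallas-Ft. Worth Film Critics Association": (5, 5),
    "Phoenix Critics Circle": (8, 10),
    "Phoenix Film Critics Society Awards": (4, 5),
    "Critics Choice Awards": (7, 10),
    # Made-up entry, not a real group, to show a relegated case.
    "Hypothetical Critics Group": (1, 10),
}


def select_roster(
    records: Dict[str, Tuple[int, int]],
    min_rate: float = 0.6,   # assumed cutoff, not from the article
    min_seasons: int = 5,    # assumed minimum track record
) -> List[Tuple[str, float]]:
    """Keep groups with a long enough record and a high enough hit rate."""
    roster = []
    for name, (hits, seasons) in records.items():
        if seasons >= min_seasons and hits / seasons >= min_rate:
            roster.append((name, hits / seasons))
    # Best hit rate first; ties broken alphabetically for a stable order.
    roster.sort(key=lambda item: (-item[1], item[0]))
    return roster


roster = select_roster(records)
for name, rate in roster:
    print(f"{name}: {rate:.0%}")
```

Rerunning the selection each season with updated records is what would make it relegation rather than a fixed roster: a group that goes cold drops out, and one that heats up gets promoted.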

But mainly, critics set the tempo for the race, and flag worthy candidates for consideration. So who’s up this year?

Of those 35 groups, 25 have awarded a best film winner. The favorite film of critics this year is Roma, which has won 12* of those prizes. Two other films have multiple wins, The Favourite (2 wins in Kansas City and Phoenix) and A Star Is Born (2 wins in St. Louis and Dallas-Ft. Worth).

So is Roma the film to beat? I’m going to be particularly interested in the Critics Choice Awards: they’re a national group with over 300 voters (nearly four times the number of Golden Globe voters!) and a Roma win would pretty much give it a sweep of the major metropolitan prizes.

But Dallas does like A Star Is Born, and for whatever reason they’ve had the same taste as the Academy for the past six years. It sure beats the Golden Globes, which you’ll hear about next week.

*Washington D.C. FCA, San Francisco FCC, Southeastern FCA, Kansas City FCC, Los Angeles FCA, Las Vegas FCS, Vancouver FCC, New York Online FC, New York FCC, Chicago FCA, Seattle FC, and Toronto FCA